Overview

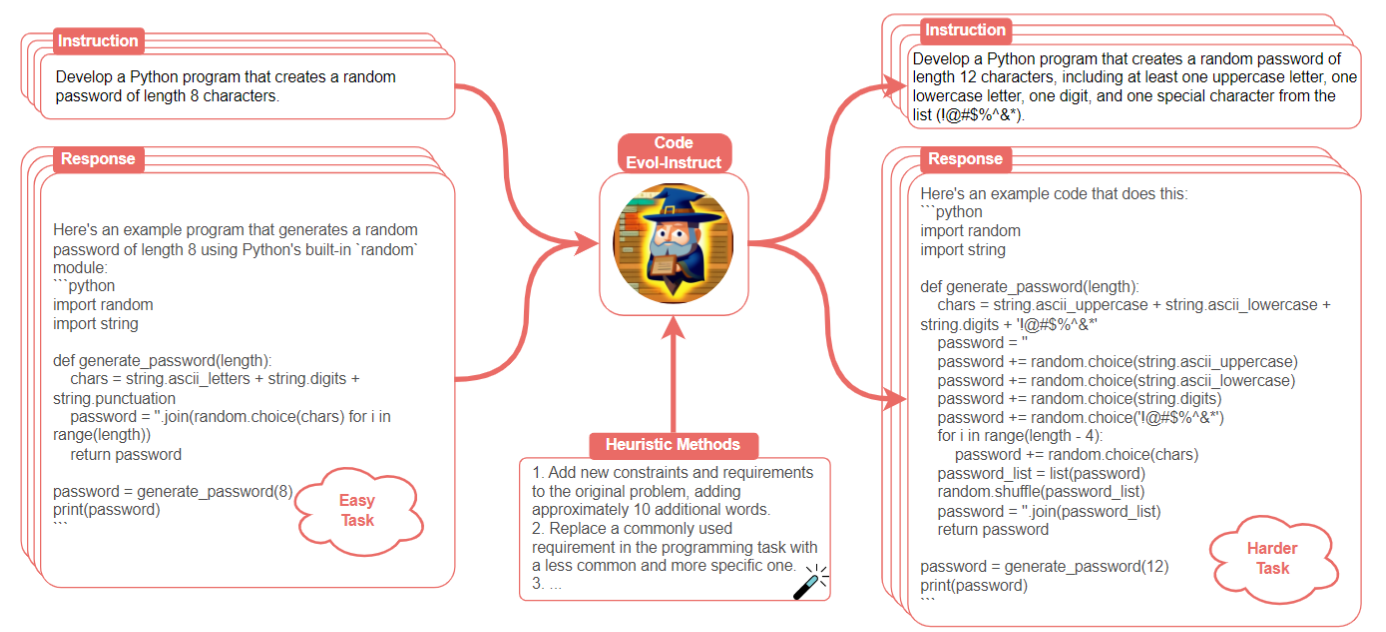

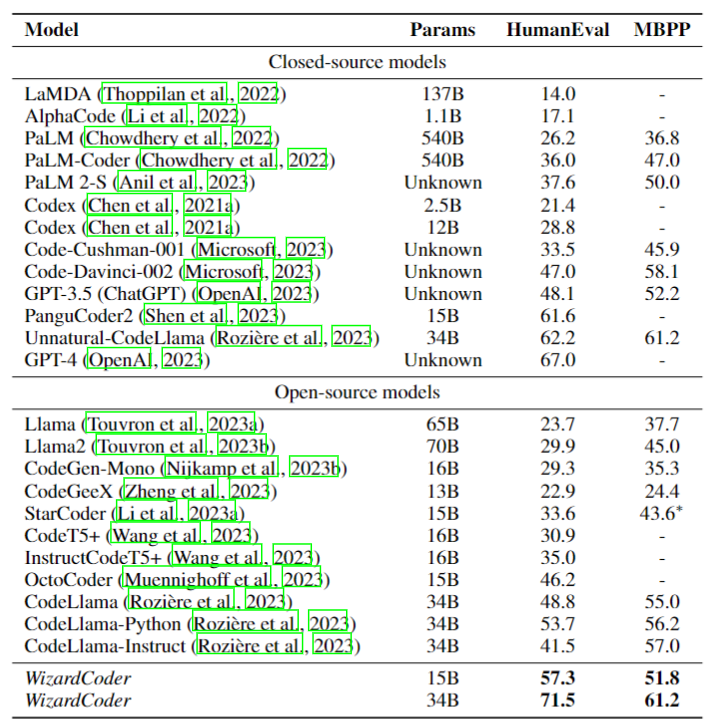

Code Large Language Models (Code LLMs), such as StarCoder, have demonstrated remarkable performance in various code-related tasks. However, different from their counterparts in the general language modeling field, the technique of instruction fine-tuning remains relatively under-researched in this domain. In this paper, we present Code Evol-Instruct, a novel approach that adapts the Evol-Instruct method to the realm of code, enhancing Code LLMs to create novel models WizardCoder. Through comprehensive experiments on five prominent code generation benchmarks, namely HumanEval, HumanEval+, MBPP, DS-1000, and MultiPL-E, our models showcase outstanding performance. They consistently outperform all other open-source Code LLMs by a significant margin. Remarkably, WizardCoder 15B even surpasses the well-known closed-source LLMs, including Anthropic's Claude and Google's Bard, on the HumanEval and HumanEval+ benchmarks. Additionally, WizardCoder 34B not only achieves a HumanEval score comparable to GPT3.5 (ChatGPT) but also surpasses it on the HumanEval+ benchmark. Furthermore, our preliminary exploration highlights the pivotal role of instruction complexity in achieving exceptional coding performance.

@article{luo2023wizardcoder,

title={WizardCoder: Empowering Code Large Language Models with Evol-Instruct},

author={Luo, Ziyang and Xu, Can and Zhao, Pu and Sun, Qingfeng and Geng, Xiubo and Hu, Wenxiang and Tao, Chongyang and Ma, Jing and Lin, Qingwei and Jiang, Daxin},

journal={arXiv preprint arXiv:2306.08568},

year={2023}

}